Hadoop YARN

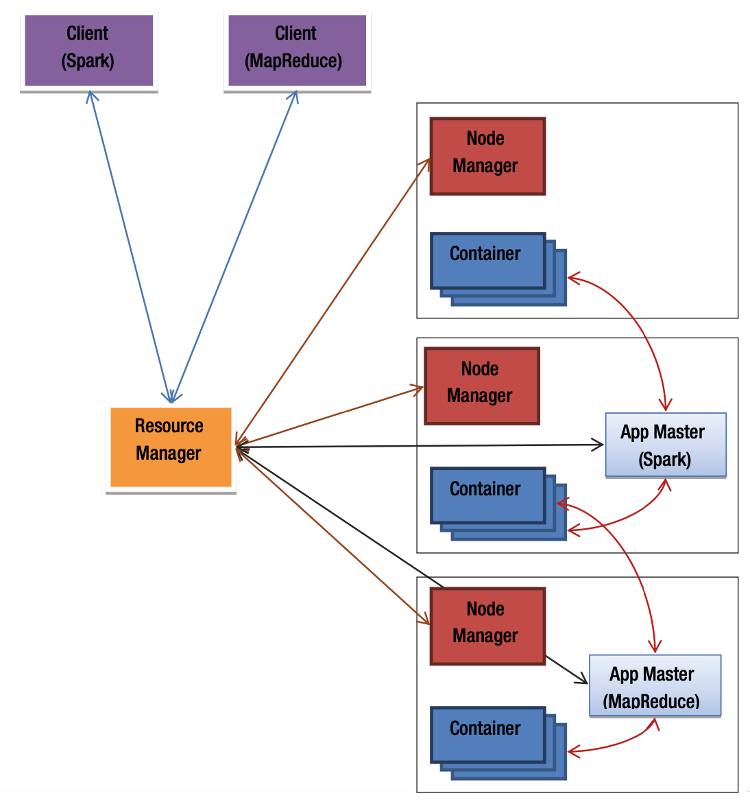

YARN (Yet Another Resource Negotiator) is a general-purpose, open-source cluster manager introduced in Hadoop 2.0. Because the MapReduce engine was reworked to run on top of it, the term "YARN" is also used as a synonym for that updated version of the framework, MapReduce 2.0 (MRv2). YARN separates cluster resource management from job execution logic, which makes it a more flexible and extensible platform for running different distributed computing engines.

Key Features and Architecture

YARN allows not only MapReduce jobs but also other compute engines such as Apache Spark, Apache Tez, and more to run on the same cluster infrastructure. This unified cluster management is made possible by its modular architecture.

Core Components of YARN:

- ResourceManager (RM):

Acts as the master node (similar to the Mesos master). It manages and schedules the resources of the entire cluster, using the resource reports it receives from all NodeManagers.

- NodeManager (NM):

Acts as the worker node (similar to a Mesos agent, formerly called a slave). It manages the resources of a single machine and reports them to the ResourceManager. It also oversees the execution of tasks in containers on that node.

The ResourceManager consolidates resource information from all NodeManagers and allocates those resources to different applications. In essence, it functions as a global scheduler for the cluster.
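The consolidate-then-allocate role described above can be illustrated with a toy model. This is only a sketch under stated assumptions: `NodeReport`, `ToyResourceManager`, and the first-fit placement rule are hypothetical names and simplifications made up for illustration, not YARN's API or its actual schedulers, which are considerably more sophisticated.

```python
from dataclasses import dataclass, field


@dataclass
class NodeReport:
    """Free resources reported by one NodeManager (hypothetical structure)."""
    node: str
    free_memory_mb: int
    free_vcores: int


@dataclass
class ToyResourceManager:
    """Toy global scheduler: keeps the latest report per node."""
    nodes: dict = field(default_factory=dict)
    allocations: list = field(default_factory=list)

    def receive_report(self, report: NodeReport) -> None:
        # A newer report from the same node replaces the older one.
        self.nodes[report.node] = report

    def cluster_totals(self) -> tuple:
        # Consolidated view of free resources across all NodeManagers.
        memory = sum(r.free_memory_mb for r in self.nodes.values())
        vcores = sum(r.free_vcores for r in self.nodes.values())
        return memory, vcores

    def allocate(self, app: str, memory_mb: int, vcores: int):
        # Assumption: simple first-fit over nodes sorted by name.
        for name in sorted(self.nodes):
            r = self.nodes[name]
            if r.free_memory_mb >= memory_mb and r.free_vcores >= vcores:
                r.free_memory_mb -= memory_mb
                r.free_vcores -= vcores
                self.allocations.append((app, name))
                return name
        return None  # no single node can satisfy the request


rm = ToyResourceManager()
rm.receive_report(NodeReport("node-1", free_memory_mb=4096, free_vcores=4))
rm.receive_report(NodeReport("node-2", free_memory_mb=8192, free_vcores=8))

totals_before = rm.cluster_totals()
spark_node = rm.allocate("spark-app", memory_mb=6144, vcores=2)
mr_node = rm.allocate("mapreduce-app", memory_mb=2048, vcores=1)
totals_after = rm.cluster_totals()
```

In this sketch the Spark request only fits on `node-2`, while the smaller MapReduce request lands on `node-1`, so two different engines end up sharing the same pool of reported resources.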

YARN Application Components

A distributed application running on YARN typically consists of the following three components:

- Client Application:

This is the entry point for submitting jobs to the YARN cluster. For instance, when using Spark, the spark-submit command is the client application.

- ApplicationMaster (AM):

Each application has its own ApplicationMaster, typically provided by the framework being used (e.g., Spark or MapReduce). The AM is responsible for negotiating resources with the ResourceManager and coordinating task execution with the NodeManagers. It also monitors the progress and health of the job. The AM runs on one of the nodes in the cluster.

- Containers:

A container represents a slice of resources (such as CPU and memory) on a node. Once the ApplicationMaster secures resource allocations from the ResourceManager, it works with NodeManagers to launch containers across the cluster. These containers run the actual application tasks.
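To connect the three components to an actual submission, here is a minimal sketch that assembles a spark-submit command line for YARN. The helper `build_spark_submit` and the file name `my_job.py` are hypothetical; the flags themselves (`--master yarn`, `--deploy-mode`, `--num-executors`, `--executor-memory`, `--executor-cores`) are standard spark-submit options. The code only builds the argument list and does not launch anything.

```python
import shlex


def build_spark_submit(app_path, num_executors=2, executor_memory="2g",
                       executor_cores=1, deploy_mode="cluster"):
    """Return a spark-submit argument list for a YARN submission (illustrative helper)."""
    if deploy_mode not in ("client", "cluster"):
        raise ValueError("deploy_mode must be 'client' or 'cluster'")
    return [
        "spark-submit",                           # the client application
        "--master", "yarn",                       # hand the job to the YARN ResourceManager
        "--deploy-mode", deploy_mode,             # cluster: the Spark driver runs inside the AM
        "--num-executors", str(num_executors),    # executors, each launched in its own container
        "--executor-memory", executor_memory,     # memory requested per executor
        "--executor-cores", str(executor_cores),  # cores requested per executor
        app_path,                                 # hypothetical application file
    ]


args = build_spark_submit("my_job.py", num_executors=4)
command_line = shlex.join(args)
```

With `--master yarn`, Spark locates the ResourceManager through the Hadoop configuration directory (`HADOOP_CONF_DIR` or `YARN_CONF_DIR`), so no host address appears on the command line. The resulting list could be passed to `subprocess.run(args)` on a machine where Spark and a YARN cluster are actually configured.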

Advantages of Using YARN

- Multi-framework Support: Spark, MapReduce, and other engines can run side by side on the same cluster.

- Resource Sharing: Applications dynamically share resources for better utilization.

- Hadoop Integration: Existing Hadoop clusters can run Spark applications easily using YARN, without requiring separate infrastructure.
